In the digital era, health is no longer just about wellness—it’s about performance, optics, and identity. Two recent Netflix documentaries, The Game Changers and Untold: The Liver King, serve as cultural artifacts of a rising genre: influencer-fueled fitness propaganda wrapped in moral theater and masculine branding.

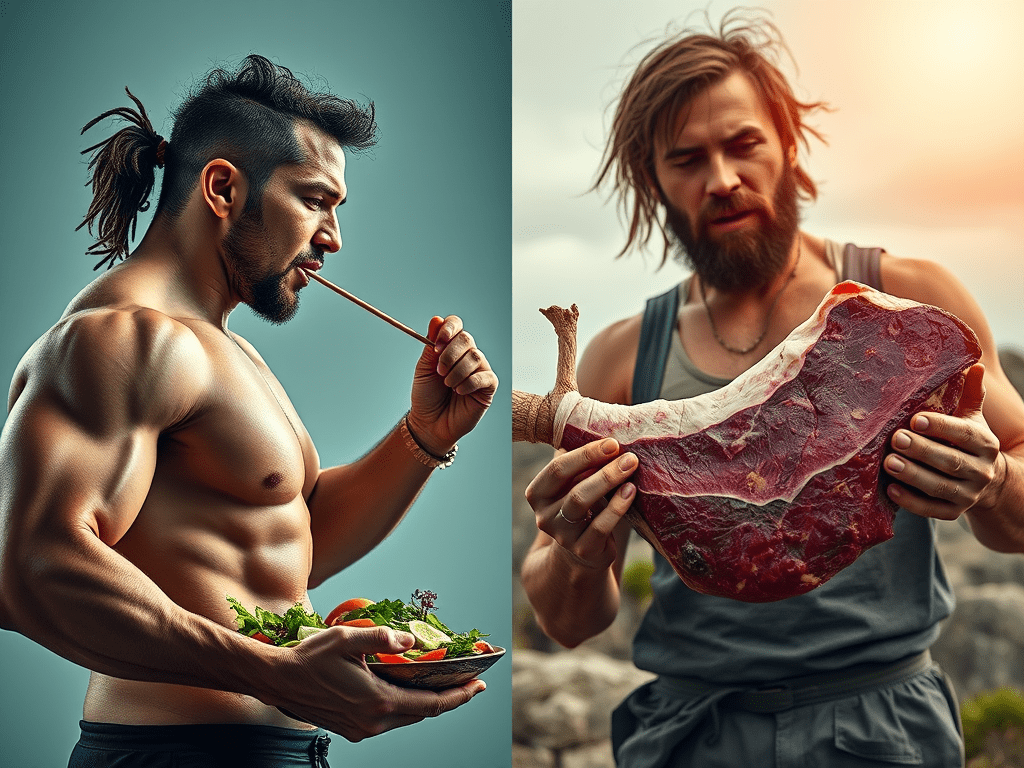

The Game Changers promotes a plant-based diet not only as an ethical choice but also as a gateway to elite athleticism, virility, and moral superiority. It uses cinematic flair, celebrity cameos, and pseudoscientific claims to repackage veganism as a Bro Lifestyle: a body-hacking shortcut to strength, stamina, and environmental salvation. Meanwhile, Untold: The Liver King profiles Brian Johnson, a self-styled “Ancestral Living” guru who gained millions of followers by promoting a raw-organ-meat, shirtless-in-the-woods routine before being exposed for secretly spending over $10,000 a month on performance-enhancing drugs.

Despite their opposing diets—one vegan, one carnivore—both narratives follow a suspiciously similar script. They offer simplified solutions to complex problems, appeal to masculine insecurity, and promise transcendence through aesthetics, all while playing fast and loose with science. Their real power lies not in evidence, but in storytelling—stories that market identity, exploit fears, and seduce with cinematic emotion.

This style of rhetoric, often called “Bro Science,” thrives in an age of algorithmic truth, where virality trumps validity. In this environment, influencer-driven wellness culture doesn’t just ignore science—it weaponizes it, bending facts to serve a brand. The result is a cultural climate where image, ideology, and emotional resonance increasingly matter more than data or critical thinking.

Assignment:

Write a well-argued, 1,700-word essay that analyzes The Game Changers and Untold: The Liver King as case studies in rhetorical manipulation, identity-based marketing, and the collapse of evidence-based discourse. In your essay, argue that the success of these Bro influencers lies not in their scientific credibility, but in their emotional, aesthetic, and ideological appeal.

You must compare the rhetorical strategies used in both documentaries and analyze the cultural implications of how masculinity is rebranded, how virtue is commodified, and how fallacious reasoning is normalized in the guise of motivation and self-improvement.

Your essay should address the following:

- Rhetorical Strategy – How do both documentaries use visual storytelling, celebrity testimony, repetition, and emotional appeals to persuade the viewer?

- Logical Fallacies – Identify and critique examples of cherry-picked science, false cause arguments, appeals to authority, or false dichotomies in each film.

- Branding Masculinity – How do the documentaries construct competing visions of the “ideal male”? What do they promise men, and what fears do they exploit?

- Collapse of Evidence-Based Thinking – Situate these documentaries in a larger cultural moment. Why do identity-driven narratives flourish in a time of disinformation, algorithmic echo chambers, and a crisis of expertise?

Style, Structure, and Submission

- Your essay must include a thesis with mapping components, clear topic sentences, and evidence-based analysis.

- You may write in a formal academic tone or use a more critical/cultural studies voice with vivid prose—as long as your argument is coherent, supported, and original.

- Use MLA style consistently.

- Final draft due: [Insert Date]

Sample Thesis Statements (with Mapping Components)

1. While The Game Changers promotes lentils and The Liver King pushes liver, both documentaries peddle the same myth: that aesthetic transformation equals virtue. Through emotionally manipulative storytelling, logical fallacies disguised as science, rebranded masculine identities, and algorithmically engineered messaging, these films reveal the dangerous collapse of evidence-based thinking in modern wellness culture.

2. The success of The Game Changers and Untold: The Liver King lies not in their nutritional claims but in their weaponization of narrative. Both films rely on emotionally loaded visuals, performative masculinity, fallacious scientific rhetoric, and identity-driven marketing to sell a fantasy of bodily perfection that exploits insecurity and bypasses rational analysis.

3. By glamorizing extreme lifestyle choices through visual spectacle, moral branding, and rhetorical sleight-of-hand, The Game Changers and Untold: The Liver King reveal a disturbing cultural trend: the replacement of scientific rigor with personal mythologies, the commodification of authenticity, and the rise of Bro Science as a post-truth performance of health.

Sample Outline

I. Introduction

- Hook: The modern Bro doesn’t just lift—he converts.

- Brief overview of both documentaries and their appeal

- Thesis statement with four components:

  - Emotional storytelling

  - Logical fallacies

  - Rebranded masculinity

  - Decline of evidence-based thinking

II. The Emotional Power of Narrative

- Use of cinematic techniques, voice-over, editing, and transformation arcs

- Celebrity endorsements (e.g., Arnold Schwarzenegger, elite athletes, Johnson himself)

- Case study: how emotion trumps empirical data in both documentaries

III. The Rhetoric of Fallacy

- The Game Changers: cherry-picking studies, false cause arguments

- Untold: The Liver King: appeal to nature, denial of performance-enhancing drug (PED) use, appeal to “ancestral purity”

- How fallacies are disguised through slick production and confidence

IV. Masculinity as Lifestyle Branding

- Compare how each documentary rebrands masculinity (lean vegan warrior vs. raw primal alpha)

- Analyze underlying fears being addressed: weakness, softness, irrelevance

- How “virtue” (animal ethics vs. authenticity) becomes a selling point for muscle aesthetics

V. The Cultural Crisis of Truth and Expertise

- Rise of influencer health culture amid distrust in traditional institutions

- The algorithm as echo chamber: content tailored to belief, not inquiry

- Bro Science as the new gospel in the post-truth digital age

VI. Conclusion

- Recap major points

- Reflect on the danger of narratives that bypass critical thought

- Call to rethink how we engage with health media and influencers in the age of viral propaganda