Desecrated Enchantment

noun

Desecrated Enchantment names the condition in which art loses its power to surprise, unsettle, and transform because the circumstances of discovery have been stripped of mystery and risk. What was once encountered through chance, patience, and private intuition is now delivered through systems optimized for efficiency, prediction, and profit. In this state, art no longer feels like a gift or a revelation; it arrives pre-framed as a recommendation, a product, a data point. The sacred quality of discovery, its capacity to enlarge the self, gives way to frictionless consumption, where engagement is shallow and interchangeable. Enchantment is not destroyed outright; it is trivialized, flattened, and repurposed as a sales mechanism, leaving the viewer informed but untouched.

***

I was half-asleep one late afternoon in the summer of 1987, my Radio Shack clock radio humming beside the bed, tuned to KUSF 90.3, when a song slipped into my dream like a benediction. It felt less broadcast than bestowed: something angelic, hovering just long enough to stir my stomach before pulling away. I snapped awake as the DJ rattled off the title and artist at warp speed. All I caught were two words. I scribbled them down like a castaway marking driftwood: Blue and Bush. This was pre-internet purgatory: no playlists, no archives, no digital mercy. It never occurred to me to call the station. My girlfriend phoned. I got distracted. And then the dread set in: the certainty that I had brushed against something exquisite and would never touch it again. Six months later, redemption arrived in a Berkeley record store. The song was playing. I froze. The clerk smiled and said, “That’s ‘Symphony in Blue’ by Kate Bush.” I nearly wept with gratitude. Angels, confirmed.

That same year, my roommate Karl was prospecting in a used bookstore, pawing through shelves the way Gold Rush miners clawed at riverbeds. He struck literary gold when he pulled out The Life and Loves of a She-Devil by Fay Weldon. The book had a charge to it—dangerous, witty, alive. He sampled a page and was done for. Weldon’s aphoristic bite hooked him so completely that he devoured everything she’d written. No algorithm nudged him there. No listicle whispered “If you liked this…” It was instinct, chance, and a little magic conspiring to change a life.

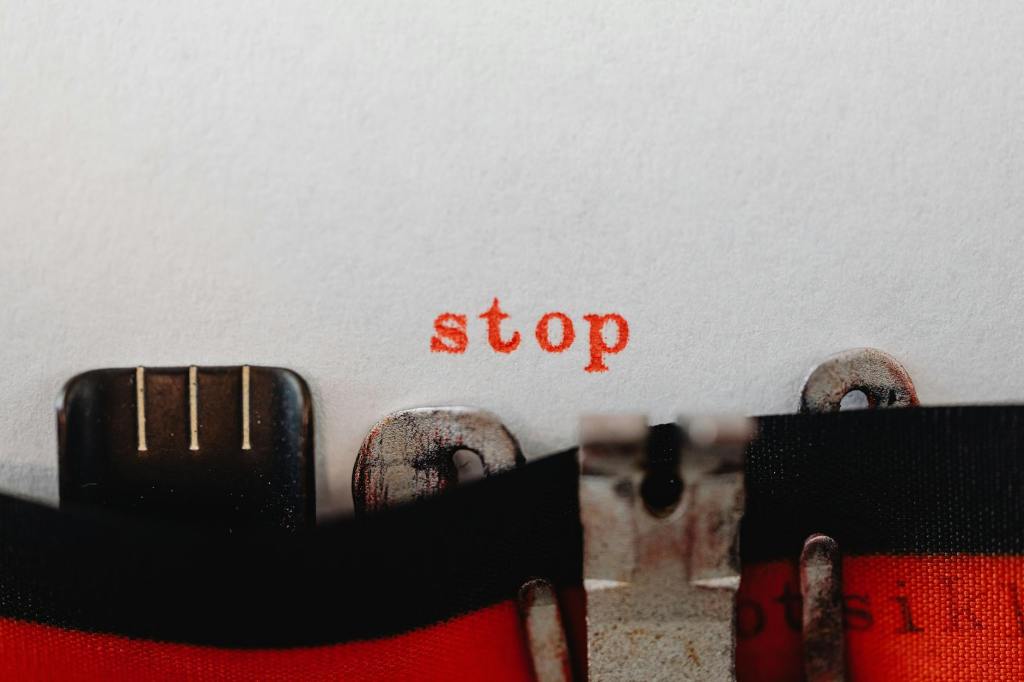

That’s how art used to arrive. It found you. It blindsided you. Life in the pre-algorithm age felt wider, riskier, more enchanted. Then came the shrink ray. Algorithms collapsed the universe into manageable corridors and wrapped us in a padded cocoon of whatever the tech lords decided counted as “taste.” As Kyle Chayka argues in Filterworld, we no longer cultivate taste so much as receive it, pre-chewed, as algorithmic wallpaper. And when taste is outsourced, something essential withers. Taste isn’t virtue signaling for parasocial acquaintances; it’s private, intimate, sometimes sacred. In the hands of algorithms, it becomes profane: associative, predictive, bloodless. Yes, algorithms are efficient. They can build you a playlist or a reading list in seconds. But the price is steep. Art stops feeling like enchantment and starts feeling like a pitch. Discovery becomes consumption. Wonder is desecrated.