I had no clue back then, but my tragic fashion choices as a young professor in the desert in the early ’90s were the desperate impulses of a kid who’d missed his shot at feeling special and was clawing to reclaim a glory he’d fumbled away when he was a teenage bodybuilder. Flash back eight years: I was working a job loading parcels at UPS in Oakland, on a low-carb diet that shredded me down to the bone. I was this close to contending for the Mr. Teenage San Francisco title. I was down to a perfectly bronzed 180 pounds, and my clothes started hanging off me like a bad costume. That meant one thing: new wardrobe. Enter a fitting room at a Pleasanton mall, where I was trying on pants behind gauzy curtains when I overheard two attractive young women debating who should ask me out. Their voices escalated, full of hunger and competition, as if I were the last slice of pizza at a frat party. I pictured them throwing down on the store carpet, pulling hair and clawing at each other’s throats, all for the privilege of walking out with the human trophy that was me.

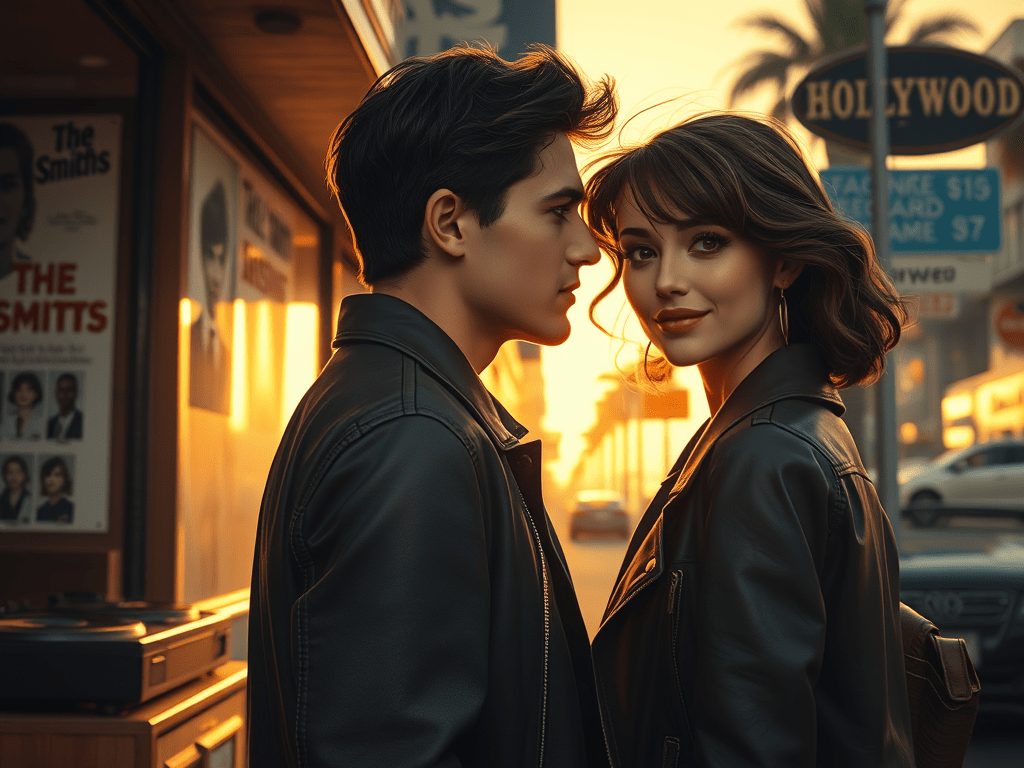

It was the golden moment I’d always dreamed of, my chance to bask in the attention and seize my shot at feeling like a demigod. So, what did I do? I froze like a deer in headlights, slapping on a look of such exaggerated indifference it was like laying out a welcome mat that said “Stay Away.” They took one look at my aloof facade and staggered off, probably mumbling about how stuck-up I seemed. But here’s the truth: I wasn’t a man full of myself—I was a coward hiding behind muscle armor.

For a short, fleeting period—from my mid-teens to early twenties—I was the kind of guy who could’ve sent Cosmopolitan’s “Bachelor of the Month” candidates sobbing into their pillows. But my personality was still crawling in the shallow end of the pool while my body was busy competing for gold medals. I had sculpted a physique that would make Greek gods nod in approval, but socially? I was like a houseplant that wilts if you talk too loudly. Gorgeous women practically threw themselves at me, and I responded with the warmth and enthusiasm of a mannequin. Behind all that bronzed, chiseled muscle was a scared little boy trapped in a fortress of self-doubt.

The frustration that consumed me as I stood there, watching those two retail employees squabble over me, was the same frustration that hit me like a truck a week later at the contest. I entered Mr. Teenage San Francisco as a “natural”—which is just a polite way of saying I didn’t juice and therefore shrank down to a point where I looked more like a wiry special-ops recruit than a bodybuilder. At six feet and 180 pounds, I had the lean, aesthetic “Frank Zane Look” just well enough to snag runner-up. But the guy who beat me was a golden-haired meathead pumped full of steroids and Medjool dates, which gave him muscles that looked inflated by a bike pump and a gut that seemed ready to explode from cramping.

The day after the contest, I was laid out at home, basking in the almost-victory and recovering from the Herculean effort of flexing through a nightmare lineup. Then the calls started pouring in. Strangers who’d gotten my number from the contest registry wanted me to model for their sketchy fitness magazines. Some sounded more like basement-dwelling creeps than actual photographers. I turned them down with all the enthusiasm of a nightclub bouncer dealing with fake IDs. But then one call stood out—a woman claiming to be an art student from UCSF, asking me to pose for her portfolio. Tempting, sure, but I politely declined.

Why? The reasons were as predictable as they were pathetic. First, I was drained from cutting down to 180 pounds and just wanted to curl up in a hole. Second, I was lazy. The thought of expending energy to meet a stranger sounded about as fun as a root canal. But the main reason? I was a professional neurotic, a certified worrywart who avoided human interaction like it was an airborne disease. The idea of meeting this mysterious woman in a San Francisco coffee shop filled me with a dread so profound that I felt like a cat eyeing a room full of rocking chairs.

By turning down those offers, I was throwing away the golden advice handed down in the Bodybuilder’s Bible, Arnold Schwarzenegger’s *Arnold: The Education of a Bodybuilder*. According to the Gospel of Arnold, I should’ve been leveraging my physique into acting gigs, business ventures, and political fame. But here’s the thing: I didn’t have Arnold’s larger-than-life charisma, his zest for adventure, or his shameless drive to turn everything into a money-making opportunity. While Arnold was out charming Hollywood and turning flexing into fortune, I was content to crawl under a rock and avoid all forms of adventure and new connections. If there had been a way to market my body without ever leaving my room, I would’ve been the undisputed king of the fitness world.

Instead, I took a different path—one paved with introversion and leading straight to a career as a college writing instructor in the California desert. By the time I hit twenty-seven, I was finally catching up socially—just in time to fantasize about all the chances I’d blown. Strutting around the desert in flamboyant outfits like a peacock trying to reclaim lost glory, I was determined to make up for all the opportunities I’d wasted, finally embracing the ridiculousness of who I’d become.