Even though I’ve been obsessed with watches for over twenty years, I arrived embarrassingly late to the G-Shock party. I didn’t plan the arrival. It felt more like this: I’m riding in the back seat of an Uber when the driver suddenly pulls up in front of a strange mansion glowing with neon light. The doors swing open. Inside are thousands of loud, jubilant G-Shock devotees who greet me like a long-lost cousin. Champagne appears. Confetti rains down. Someone hands me a microphone and asks for a testimonial.

I have no prepared remarks. But I can tell the truth.

For two decades I was perfectly happy collecting Seiko mechanical divers. They were my tribe. Yet somewhere in the back of my mind a particular watch kept whispering to me: the G-Shock Frogman. I had admired it on and off for over a decade. Amazingly, the same model was still available, so I finally ordered one from Japan. A watch that once would have cost me $400 now demanded $550, which is the sort of price inflation that causes a small twitch in the eyelid.

When the Frogman arrived, something strange happened.

I couldn’t take it off.

The watch felt uncannily right, as if some committee of Japanese engineers had secretly studied my personality and designed a wrist instrument to match it. It was heroic, absurdly tough, and far more accurate than my mechanical divers. Within weeks I stopped wearing the mechanicals altogether. Three of them quietly left the collection. Whether I’m taking a mechanical hiatus or attending their funeral remains unclear.

What I do know is that G-Shock has given my watch hobby a strange second life.

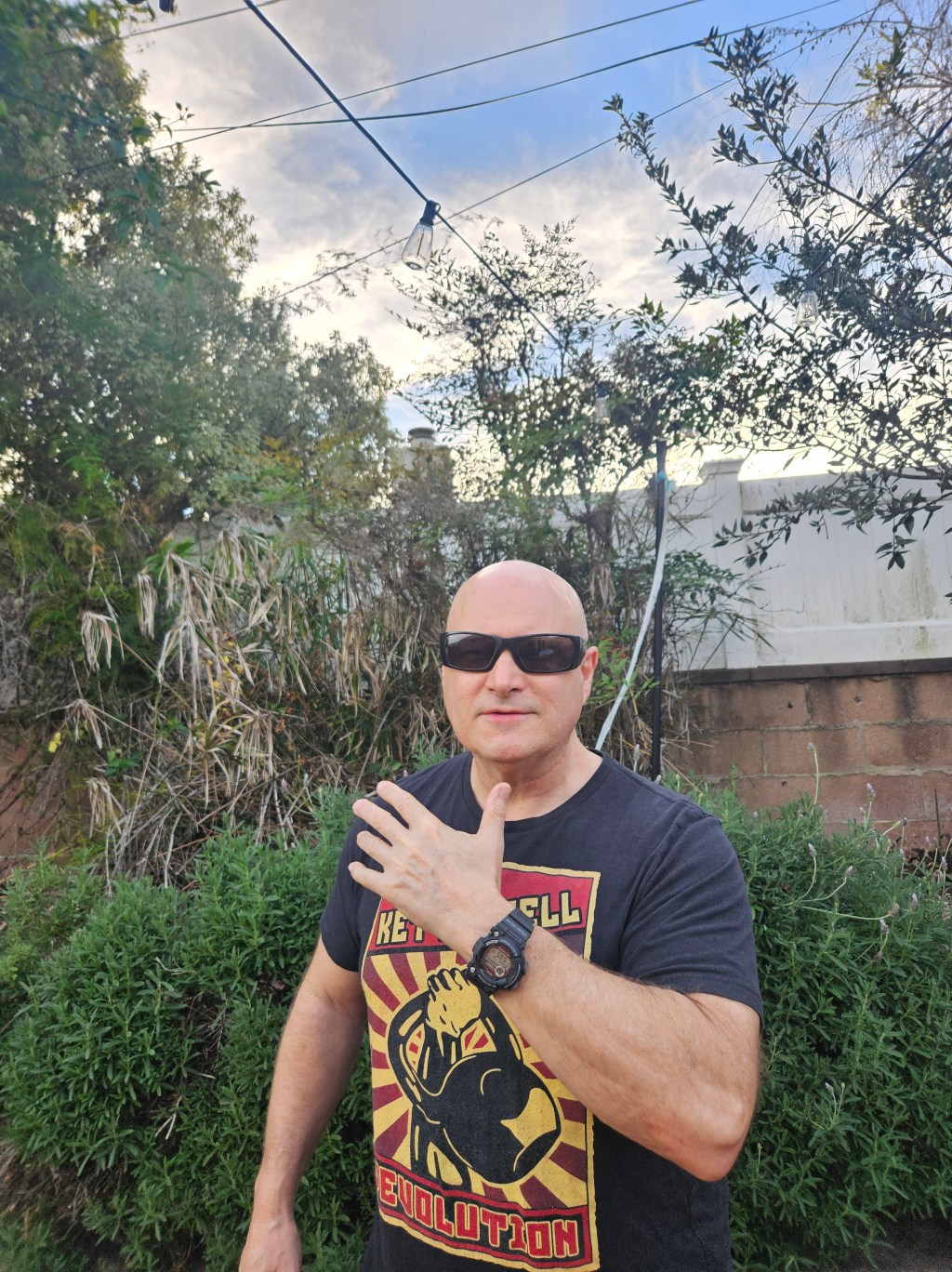

At the moment I own two of them: the Frogman and the GW-7900. Viewers on my YouTube channel insisted the 7900 deserved a proper name. A subscriber named Dave solved the problem immediately. “Call it the Tidemaster,” he said, since the watch tracks tides.

Perfect.

So now I have the Frogman and the Tidemaster. One cost me $550. The other cost $110.

Here’s the truth no luxury marketing department wants to hear: from a purely practical standpoint, the $110 Tidemaster is the better watch. Its numerals are larger, thicker, and darker. The contrast is superior. At night the backlight illuminates big bold digits that practically shout the time. The Frogman, by comparison, requires a small squint and a mild prayer.

In other words, the cheap watch wins the legibility contest.

A third watch is arriving next week: the G-Shock GW-6900. Like the 7900 before Dave christened it, it lacks a proper nickname. The watch has three round indicators above the display, which makes it look like a mildly deranged insect. I considered several possibilities. “Triple Graph” sounds like a geometry exam. “Militaire” sounds like a fragrance sold in an airport duty-free shop. So I’m going with the obvious choice:

The 3-Eyed Monster.

My goal is simple: settle into a stable Three-Watch G-Shock Trifecta. All three watches share the same genetic code—big heroic cases, atomic timekeeping, solar charging, digital displays, and rubber straps. That combination is my personal sweet spot.

Now we arrive at the temptation.

Many of you have suggested I should upgrade to the sapphire-crystal Frogman, a watch that lurks around the $1,000 mark. And believe me, that watch is occupying prime real estate in my brain. But I’d like to present a few rebuttals before I surrender to the credit card.

First, price. The Tidemaster and the 3-Eyed Monster cost about $110 each. Even the Frogman stayed under $600. Part of the joy of G-Shock is that it delivers durability, accuracy, and ridiculous hero aesthetics without the emotional trauma of a four-figure purchase. Once you push a G-Shock toward a thousand dollars, you start undermining the very spirit that makes the watch fun.

Second, technical overkill. The sapphire Frogman is loaded with features I will never use. Yes, its display is slightly more legible than the one on my existing Frogman, but that problem is already solved by the Tidemaster and the 3-Eyed Monster.

Third, rotational anxiety. Two Frogmans would cancel each other out. I doubt I could sell my current Frogman—it has already fused itself to my identity like a stubborn barnacle.

Fourth, and perhaps most decisive, is age. If I were in my thirties or forties, building a large G-Shock collection might make sense. But I’ll be turning sixty-five this year. I don’t need a museum of watches. Between four Seiko mechanical divers, a quartz Seiko Tuna, and, counting the one in the mail, three G-Shocks, I already have more watches than any reasonable human requires.

In fact, I could easily imagine a future where I own nothing but the three G-Shocks and feel perfectly content.

So there you have it.

Will temptation vanish completely? Of course not. Tonight I may dream about the sapphire Frogman. In a moment of midnight weakness I might even sleepwalk to my computer and hover over the Buy Now button.

But I like to believe that the reasonable part of my brain will prevail over the dopamine addict who lives next door.

At least that’s the story I’m telling myself.