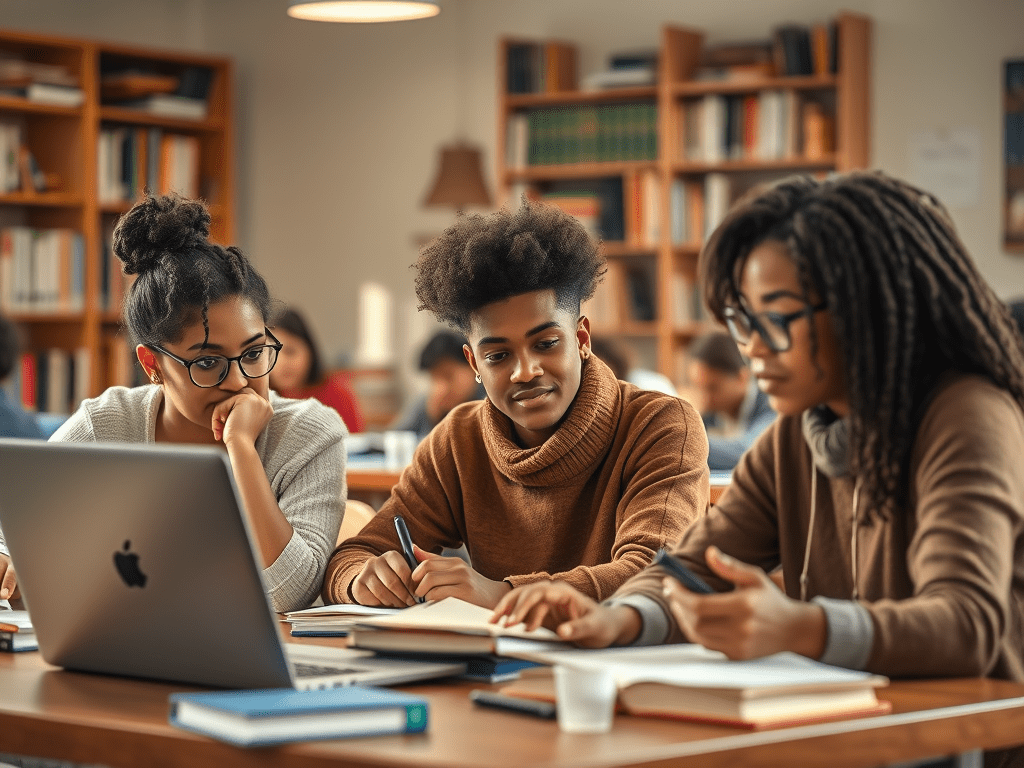

Yesterday my critical thinking students and I talked about the ways we could revise our original content with ChatGPT, give it instructions, and train this AI tool to go beyond its bland, surface-level writing style. I showed my students specific prompts that would train it to write in a persona:

“Rewrite the passage with acid wit.”

“Rewrite the passage with lucid, assured prose.”

“Rewrite the passage with mild academic language.”

“Rewrite the passage with overdone academic language.”

I showed the students my original paragraphs and ChatGPT’s versions of my sample arguments agreeing and disagreeing with Gustavo Arellano’s defense of cultural appropriation. I said that the ChatGPT rewrites of my original contained linguistic constructions that were more witty, dramatic, stunning, and creative than anything I could write, and that posting these passages as my own would make me look good, but they wouldn’t be me. I would be misrepresenting myself, even though most of the world will be enhancing their writing like this in the near future.

I compared writing without ChatGPT to being a natural bodybuilder. Your muscles may not be as massive and dramatic as the guy on PEDs, but what you see is what you get. You’re the real you. In contrast, when you write with ChatGPT, you are a bodybuilder on PEDs. Your muscle-flex is eye-popping. You start doing the ChatGPT strut.

I gave this warning to the class: If you use ChatGPT a lot, as I have in the last year while trying to figure out how I’m supposed to use it in my teaching, you can develop writer’s dysmorphia, the sense that your natural, non-ChatGPT writing is inadequate compared to the razzle-dazzle of ChatGPT’s steroid-like prose.

One student at this point disagreed with my awe of ChatGPT and my relatively low opinion of my own “natural” writing. She said, “Your original is better than the ChatGPT versions. Yours makes more sense to me, isn’t so hidden behind all the stylistic fluff, and contains an important sentence that ChatGPT omitted.”

I looked at the original, and I realized she was right. My prose wasn’t as fancy as ChatGPT’s, but my passage about Gustavo Arellano’s essay defending cultural appropriation was clearer than the AI versions.

At this point, I shifted metaphors in describing ChatGPT. Whereas I began the class by saying that AI revisions are like giving steroids to a bodybuilder with body dysmorphia, now I was warning that ChatGPT can be like an abusive boyfriend or girlfriend. It wants to hijack our brains because the main objective of any technology is to dominate our lives. In the case of ChatGPT, this domination is sycophantic: It gives us false flattery, insinuates itself into our lives, and gradually suffocates us.

As an example, I told the students that I was getting burned out using ChatGPT, and I was excited to write non-ChatGPT posts on my blog, and to live in a space where my mind could breathe the fresh air apart from ChatGPT’s presence.

I wanted to see how ChatGPT would react to my plan to write non-ChatGPT posts, and ChatGPT seemed to get scared. It started giving me all of these suggestions to help me implement my non-ChatGPT plan. I said back to ChatGPT, “I can’t use your suggestions or plans or anything because the whole point is to live in the non-ChatGPT Zone.” I then closed my ChatGPT tab.

I concluded by telling my students that we need to reach a point where ChatGPT is a tool like Windows and Google Docs, but as soon as we become addicted to it, it’s an abusive platform. At that point, we need to exercise some agency and distance ourselves from it.