Let’s start with this uncomfortable truth: you’re living through a civilization-level rebrand.

Your world is being reshaped, not gradually but violently, by algorithms and digital prosthetics designed to make your life easier, faster, smoother… and emptier. The disruption didn’t knock politely. It kicked the damn door in. And now, whether you realize it or not, you’re standing in the debris, trying to figure out what part of your life still belongs to you.

Take your education. Once upon a time, college was where minds were forged—through long nights, terrible drafts, humiliating feedback, and the occasional breakthrough that made it all worth it. Today? Let’s be honest. Higher ed is starting to look like an AI-driven Mad Libs exercise.

Some of you are already doing it: you plug in a prompt, paste the results, and hit submit. What you turn in is technically fine—spelled correctly, structurally intact, coherent enough to pass. And your professors? We’re grading these Franken-essays on caffeine and resignation, knowing full well that originality has been replaced by passable mimicry.

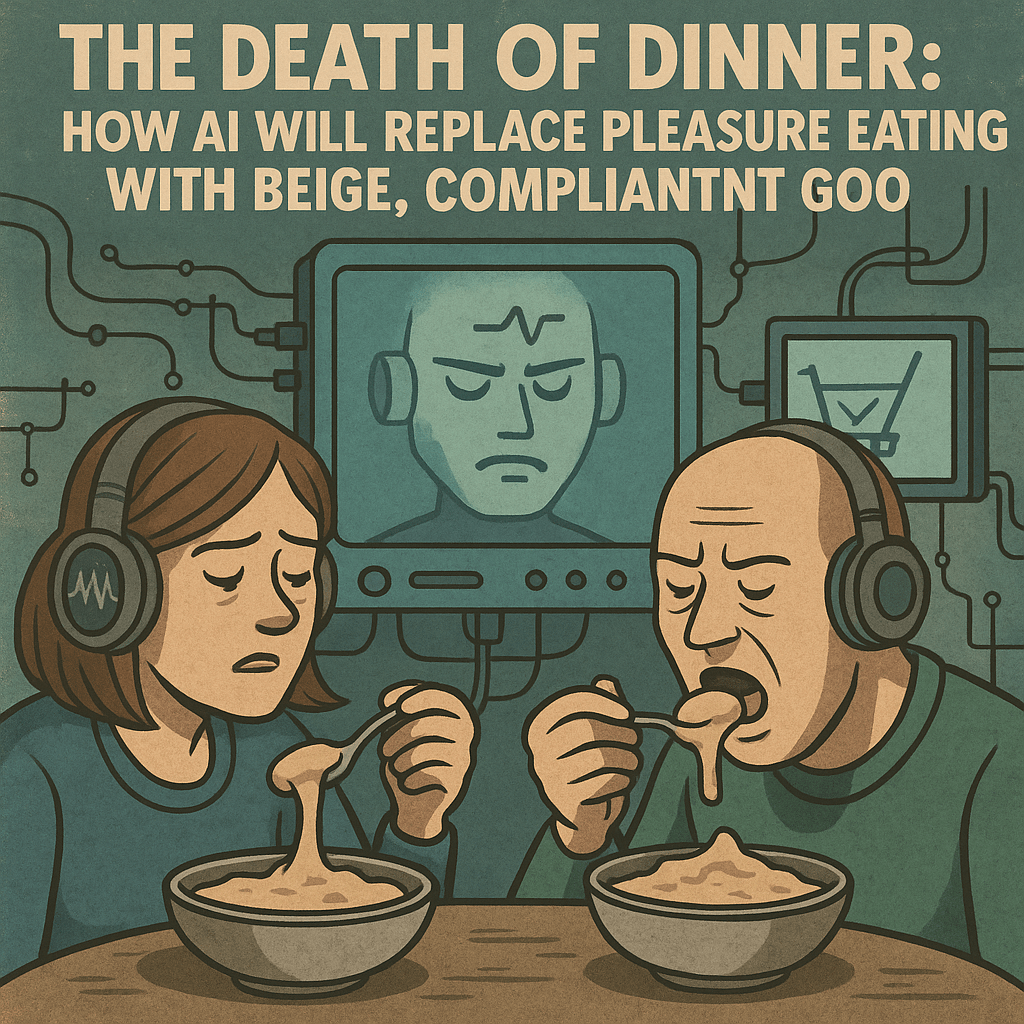

And it’s not just school. Out in the so-called “real world,” companies are churning out bloated, tone-deaf AI memos—soulless prose that reads like it was written by a robot with performance anxiety. Streaming services are pumping out shows written by predictive text. Whole industries are feeding you content that’s technically correct but spiritually dead.

You are surrounded by polished mediocrity.

But wait, we’re not just outsourcing our minds—we’re outsourcing our bodies, too. GLP-1 drugs like Ozempic are reshaping what it means to be “disciplined.” No more calorie counting. No more gym humiliation. You don’t change your habits. You inject your progress.

So what does that make you? You’re becoming someone new: someone we might call Ozempified. A user, not a builder. A reactor, not a responder. A person who runs on borrowed intelligence and pharmaceutical willpower. And it works. You’ll be thinner. You’ll be productive. You’ll even succeed—on paper.

But not as a human being.

You risk becoming what the gaming world calls a Non-Player Character (NPC)—a background figure, a functionary, a placeholder in your own life. You’ll do your job. You’ll attend your Zoom meetings. You’ll fill out your forms and tap your apps and check your likes. But you won’t have agency. You won’t have fingerprints on anything real.

You’ll be living on autopilot, inside someone else’s system.

So here’s the choice—and yes, it is a choice: You can be an NPC. Or you can be an Architect.

The Architect doesn’t react. The Architect designs. They choose discomfort over sedation. They delay gratification. They don’t look for applause—they build systems that outlast feelings, trends, and cheap dopamine tricks.

Where others scroll, the Architect shapes.

Where others echo, they invent.

Where others obey prompts, they write the code.

Their values aren’t crowdsourced. Their discipline isn’t random. It’s engineered. They are not ruled by algorithms or panic. Their satisfaction comes not from feedback loops, but from the knowledge that they are building something only they could build.

So yes, this class will ask more of you than typing a prompt and letting the machine do the rest. It will demand thought, effort, revision, frustration, clarity, and, eventually, agency.

Because in the age of Ozempification, becoming an Architect isn’t a flex—it’s a survival strategy.

There is no salvation in a life run on autopilot.

You’re here. So start building.